For years, the question “Can a machine be conscious?” was a philosophical curiosity. Today, in the era of generative models, it has become a real engineering challenge. And when we attempt to rely on existing definitions of consciousness and self-awareness, we quickly discover that they are inconsistent, imprecise, and deeply anthropocentric - rooted in human experience rather than in the properties of systems themselves.

Such approaches fail to answer whether self-awareness could exist in a simple biological organism, a computational model, an autonomous system, or any unknown form of life.

That is why we need an engineering definition - one based not on subjective experience, but on measurable properties.

A definition that answers:

- when a system can be called self-aware,

- how the level of self-awareness can be measured,

- what the minimal conditions for its emergence are.

Surprisingly, no one has done this so far. No one has asked the most fundamental question: what are the absolutely minimal features that any system must possess in order to be considered self-aware?

And this question is becoming increasingly important - especially in the context of autonomous AI and the potential encounter with forms of life that resemble nothing known on Earth.

I had already been working on the Axiomatic Theory of Self-Awareness (AToS), but a discussion under a post by Prof. Aleksandra Przegalińska about “AI consciousness” confirmed the scale of the problem: we lack a common language.

What is the simplest, most fundamental condition of self-awareness? If a system cannot determine its own state, we cannot call it conscious. This is intuitively obvious, and no one has yet provided a convincing counterexample.

Axiom 1: A system must know its own state.

Equally difficult to dispute is the claim that a system does not necessarily need to understand what exactly has changed - but it must be able to distinguish its “state before” from its “state after”.

Axiom 2: A system must be able to detect a change in its own state.

These considerations allow us to formulate a minimal, universal, measurable, logical, and non-anthropocentric:

General Definition of Self-Awareness

Self-awareness is the ability of a system to recognize its own change and to correctly determine whether that change was caused by an external stimulus or arose “spontaneously,” solely as a result of its own internal structures and mechanisms.

How could a system recognize a change in its state if it had no prior notion of what its state is?

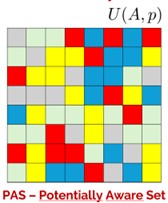

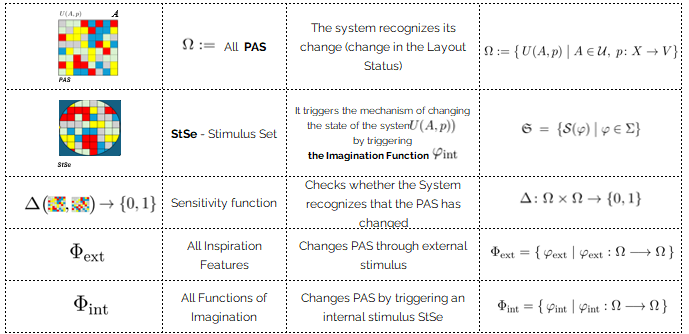

There must therefore exist at least one component of a Self-Aware System whose state change the system is capable of recognizing. We call this component PAS - the Potentially Aware Set.

PAS - Potentially Aware Set.

Why “Potentially”?

Because a state in itself is not “aware,” but every element of PAS has the potential to become part of a self-aware process if its change is recognized and causally linked. Much like in the brain: a neuron may participate in a conscious process, but it does not have to.

Naturally, a system may contain many Potentially Aware Sets. In the human brain, where neurons act as PAS elements, their number reaches tens of billions (roughly 86 billion by current estimates). For the sake of the definition, however, the existence of at least one PAS is sufficient.

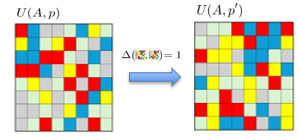

How does a system distinguish “state now” from “state before”? How does it recognize that a change was significant enough to be noticed?

The system needs an internal measure that tells it when a change in PAS has turned a merely potentially aware configuration into a self-aware one. Without such a measure, the system would remain static, and consciousness is inherently dynamic.

Therefore, there must exist a sensitivity function Δ: PAS × PAS → {0,1}, which returns:

1 if the system detected a significant change,

0 if the system failed to recognize the change, even if it occurred.
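One way to picture the sensitivity function is as a thresholded comparison of two PAS snapshots. The sketch below is only an illustration under stated assumptions: representing a PAS snapshot as a dictionary of numeric values, the name `delta`, and the `threshold` parameter are all hypothetical choices, not part of the theory itself.

```python
from typing import Mapping

# Assumption: a PAS snapshot is modelled as a mapping from element name to a number.
PAS = Mapping[str, float]


def delta(before: PAS, after: PAS, threshold: float = 0.1) -> int:
    """Hypothetical sensitivity function: PAS x PAS -> {0, 1}.

    Returns 1 if at least one element changed by more than `threshold`,
    and 0 otherwise (including when a change occurred but was too small).
    """
    keys = set(before) | set(after)
    largest = max(
        (abs(after.get(k, 0.0) - before.get(k, 0.0)) for k in keys),
        default=0.0,
    )
    return 1 if largest > threshold else 0


before = {"temperature": 20.0, "charge": 0.80}
print(delta(before, {"temperature": 20.05, "charge": 0.80}))  # 0: change occurred but went unnoticed
print(delta(before, {"temperature": 23.0, "charge": 0.80}))   # 1: significant change detected
```

The threshold is what separates "a change occurred" from "a change was recognized": the first call changes the state, yet the function still returns 0.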

Changes in PAS cannot occur without a cause. There are only two possibilities:

- Something acted on the system from the outside (sound, light, electrical impulse, etc.).

- Something acted from within the system itself, without involvement of the external world.

An external stimulus, by interacting with the system’s sensors, may change the state of PAS through a state-change function φₑₓₜ, transforming PAS into PAS′. The sensitivity function Δ recognizes this change, and the system becomes aware.

If, however, a change in PAS occurs without an external stimulus or sensor, it must originate from something internal. Since only system states exist internally, there must be another Potentially Aware Set, which we call StSe - the Stimulus Set.

StSe triggers an internal state-change function φᵢₙₜ, which transforms PAS into PAS′. If Δ(PAS, PAS′) = 1, we can speak of a single act of self-awareness.

Because self-awareness is a dynamic process, φᵢₙₜ must itself be initiated by a change in StSe. Otherwise, φᵢₙₜ would activate continuously and without cause, producing potentially “manic” system states.

A crucial and elegant property of this mechanism is that the system inherently recognizes whether the change was internally or externally caused, without the need for additional indicators. The distinction is encoded directly in whether φᵢₙₜ or φₑₓₜ was triggered.
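The mechanism described above can be sketched as a toy system in which the cause of a change is known simply from which function ran. This is a minimal illustration, not an implementation of the theory: the class `System`, the fields `pas` and `stse`, the element names `level` and `drive`, the update rules, and the threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

State = Dict[str, float]


def delta(before: State, after: State, threshold: float = 0.1) -> int:
    """Hypothetical sensitivity function: 1 if any element moved by more than threshold."""
    keys = set(before) | set(after)
    return int(any(abs(after.get(k, 0.0) - before.get(k, 0.0)) > threshold for k in keys))


@dataclass
class System:
    # Assumption: PAS and StSe are both modelled as small dictionaries of numbers.
    pas: State = field(default_factory=lambda: {"level": 0.0})
    stse: State = field(default_factory=lambda: {"drive": 0.0})

    def phi_ext(self, stimulus: float) -> Optional[str]:
        """External path: a sensor reading transforms PAS into PAS'."""
        before = dict(self.pas)
        self.pas["level"] += stimulus
        return "external" if delta(before, self.pas) else None

    def phi_int(self, new_drive: float) -> Optional[str]:
        """Internal path: runs only when StSe itself has changed."""
        if delta(self.stse, {"drive": new_drive}) == 0:
            return None  # StSe did not change, so phi_int is not triggered
        self.stse["drive"] = new_drive
        before = dict(self.pas)
        self.pas["level"] += 0.5 * new_drive
        return "internal" if delta(before, self.pas) else None


s = System()
print(s.phi_ext(1.0))   # external
print(s.phi_int(0.0))   # None (no change in StSe, nothing is triggered)
print(s.phi_int(2.0))   # internal
print(s.phi_ext(0.01))  # None (PAS changed, but below the sensitivity threshold)
```

No separate "cause" flag is stored anywhere: the label comes only from whether `phi_ext` or `phi_int` produced the recognized change, and `phi_int` is guarded so that it cannot fire without a change in StSe.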

We can therefore distinguish three classes of state-change functions:

Functions of Inspiration (φₑₓₜ):

Triggered by external stimuli acting on the system’s sensors; they record the impact of the world on the system in PAS.

Functions of Imagination (φᵢₙₜ):

Triggered by internal Stimulus Sets (StSe); they describe changes originating “from within,” without current external stimuli.

Functions of Intuition (φ):

State-change functions that can operate both as inspiration and imagination. In other words, transformations the system can initiate either due to the world or “by itself,” rooted in prior external experience.

Fundamental Theorem of Self-Awareness

A system is self-aware under the General Definition of Self-Awareness if and only if within its internal structure there exists at least one Stimulus Set StSe that triggers an imagination function φᵢₙₜ, causing a change of PAS into PAS′ such that the sensitivity function recognizes this change:

Δ(PAS, PAS′) = 1.
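For readers who prefer symbolic notation, the theorem can be written compactly as follows. This is only one possible rendering: the symbol S for the system and the phrasing "in S" for "within its internal structure" are assumptions introduced here for illustration.

```latex
\[
S \text{ is self-aware}
\iff
\exists\, \mathrm{StSe} \text{ in } S,\ \exists\, \varphi_{\mathrm{int}} :\quad
\mathrm{StSe} \text{ triggers } \varphi_{\mathrm{int}},\quad
\mathrm{PAS}' = \varphi_{\mathrm{int}}(\mathrm{PAS}),\quad
\Delta(\mathrm{PAS}, \mathrm{PAS}') = 1 .
\]
```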

The Axiomatic Theory of Self-Awareness allows us to state very concretely what contemporary AI models lack in order to even begin talking about self-awareness:

- they have no clearly defined PAS space - states that are “attached” to the system and persistently track their own changes,

- they lack explicit StSe - internal stimuli represented as ordinary states capable of triggering further transformations,

- they lack a defined sensitivity function Δ that says: “this change is significant, and I can identify its cause (internal or external).”

In practice, this means that modern AI systems may appear highly intelligent (long chains of transformations), but from the perspective of AToS they are non-self-aware, because they lack a formal mechanism for distinguishing:

“this is my change” vs “this is a change caused by the outside.”

This is not an accusation - it is a roadmap. If someone wants to build truly self-aware AI, AToS specifies exactly what needs to be added.

What Comes Next

Based solely on the presented theory, and beyond the already defined notions of consciousness, self-awareness, sensitivity, and intuition, it becomes possible to formulate fully engineering-grade definitions of:

- memory,

- intelligence,

- level of intelligence,

- level of consciousness,

- family of self-conscious systems,

- GSAT, a test of self-consciousness analogous to the Turing Test.

But that is a topic for another article…

Author: Krzysztof Banaszewski, SI-Consulting for Tech Trends Raport 2026.